Choosing the Best Graphics Settings for PC Gaming (Low vs Medium vs High vs Ultra)

Last Updated: January 28, 2023

Whether you're planning a new gaming PC build or purchase and wondering which settings (low, medium, high, or ultra) to look at when studying performance benchmarks, or you simply want to choose the best graphics settings to improve performance, this beginner's guide to optimizing game settings explains what you need to know.

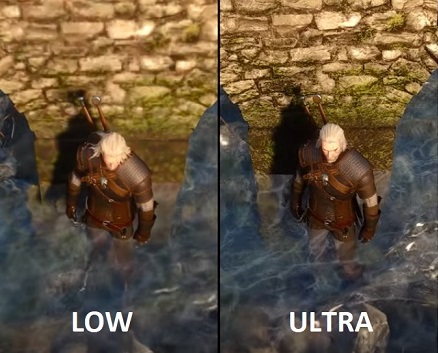

The Witcher 3: Low vs Ultra Settings Side by Side

If you're new to PC gaming, you've probably heard talk of graphics settings and "ultra" settings and wondered what exactly they are, why they matter, and what you should know about them when planning and building a PC. When you game on a given console, everybody has the same hardware, so everyone sees the same quality of graphics on-screen. With PC games, the spectrum of possible hardware that a player may be using is vast.

One PC gamer may be playing a certain game on a high-end PC that runs the game and all its special graphics effects without a hiccup, whilst another may be sporting a cheap, low-spec "potato" PC that struggles to run the game smoothly. That's where game settings come in: unlike console games, which have fixed graphics options for the most part, PC games give players a whole host of graphics options to adjust and tweak in order to improve performance.

The graphics settings menu of Warcraft 3

The catch? When you lower settings, the graphics quality and effects won't be as good, but this is the sacrifice you have to make if you want better performance in a certain game that is struggling on your PC. If your computer can't run a certain game at its highest settings, you'll want to strike a nice balance of graphics quality and performance until you're happy with the frame rate and performance you get.

This balance varies from gamer to gamer: some will want flawlessly smooth gameplay in all games, whereas others may not mind the occasional slowdown in the name of the highest quality graphics possible. It all comes down to what frame rate you're happy with and the type of game you're playing (frame-rate slowdowns are more of a problem in fast-paced games where every millisecond counts; think Counter-Strike or Overwatch).
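To put "every millisecond counts" into numbers, it helps to convert a frame rate into the time your PC has to draw each frame. The snippet below is just a small illustrative sketch of that arithmetic (the function name `frame_time_ms` is made up for this example):

```python
def frame_time_ms(fps: float) -> float:
    """Return the time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    # Common frame rate targets and the per-frame time budget they imply.
    for fps in (30, 60, 144):
        print(f"{fps} FPS -> {frame_time_ms(fps):.2f} ms per frame")
```

At 60 FPS each frame has roughly 16.7 ms to render, while at 144 FPS the budget shrinks to about 6.9 ms, which is why high frame rate targets usually mean lower graphics settings.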

So, if you really are new to the wonderful world of PC gaming, you may be wondering how to lower or increase graphics settings. Simply look for a section in the game's main menu called something like graphics options or video options.

Many games offer presets such as Low, Medium, High, or Ultra. As well as selecting a preset (which sets a default range of settings for you; more on this below), you should also be able to go in and change individual graphics settings if you wish to customize further (you may have to select the "custom" preset to be able to do this, as shown below).

Selecting a preset will automatically set all the graphics settings to a certain level

Low vs Medium vs High vs Ultra Settings

What are presets? As mentioned above, most PC games have a list of presets: default settings for various levels of graphics quality, pre-set by the game's developers. They make changing a game's settings easy, as you don't have to go in and tweak individual settings (which can be confusing if you don't know what all the settings do).

Most games will automatically set a certain preset for you based on which hardware the game detects that you have, but you may want to change the preset if you either want better performance (eg change it from high to medium) or better visual quality (eg change it from high to ultra). The higher the preset, the more demanding the game will be on your hardware (and therefore the lower your frame rate will be).
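If you've measured (or looked up benchmarks for) the average frame rate your PC gets on each preset, choosing one boils down to "the highest preset that still hits my target". Here's a rough sketch of that logic; the function name and the FPS numbers are made up for illustration and aren't from any real game or benchmark:

```python
from typing import Dict, Optional

# Presets ordered from most to least demanding.
PRESET_ORDER = ["ultra", "high", "medium", "low"]


def pick_preset(measured_fps: Dict[str, float], target_fps: float) -> Optional[str]:
    """Return the most demanding preset whose measured FPS meets the target."""
    for preset in PRESET_ORDER:
        fps = measured_fps.get(preset)
        if fps is not None and fps >= target_fps:
            return preset
    return None  # no measured preset reaches the target


if __name__ == "__main__":
    # Hypothetical average FPS on one PC at one resolution.
    example = {"low": 140, "medium": 110, "high": 75, "ultra": 52}
    print(pick_preset(example, target_fps=60))
    print(pick_preset(example, target_fps=100))
```

With these example numbers, a 60 FPS target lands on high and a 100 FPS target lands on medium. If nothing reaches your target, the function returns `None`, which is your cue to lower the resolution or tweak individual settings.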

In most games there are usually four, sometimes three, default presets, usually named something along the lines of the following:

- Low - When you select the low preset, the game uses the lowest graphics settings across the board. Running a game at low settings is helpful if you want the highest frame rates possible, either because you have a weak system or because you want extra-high frame rates for a 144Hz monitor.

- Medium - A medium preset improves the graphics quality slightly over low settings at the expense of slightly lower frame rates. This might be all your system can handle depending on your PC, resolution, and the game you're running.

- High - Nearing the maximum visual quality of the game's graphics/effects at the further expense of lower performance. How much lower your FPS will be compared to medium/low will depend on the game. But also, depending on the game, you might not even notice much of a difference between high and ultra settings.

- Ultra - Most games call the maximum preset "ultra", but some may name it "extreme", "ultra high" or "very high" instead. The ultra preset will, you guessed it, crank up all the graphics settings to the max, hence the term "maxed out" that gamers use. Keep in mind, though, that even if you select the default ultra/maximum preset, that doesn't necessarily mean your game is set to the highest possible graphics available, as there may be other specific graphics options that are not enabled when you select an ultra preset (such as anti-aliasing or ray tracing, for example).

Differences in preset settings for Witcher 3
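One way to picture a preset is as a named bundle of individual settings, with a "custom" setup being a preset plus your own overrides. The sketch below is purely illustrative: the setting names, values, and the `build_settings` helper are assumptions for this example and don't come from any particular game.

```python
# Hypothetical setting names and values; every game exposes its own list.
PRESETS = {
    "low":    {"texture_quality": "low",    "shadow_quality": "low",    "draw_distance": "low"},
    "medium": {"texture_quality": "medium", "shadow_quality": "medium", "draw_distance": "medium"},
    "high":   {"texture_quality": "high",   "shadow_quality": "high",   "draw_distance": "high"},
    "ultra":  {"texture_quality": "ultra",  "shadow_quality": "ultra",  "draw_distance": "ultra"},
}

# Options that some games leave off even on the top preset (assumed defaults).
EXTRA_OPTIONS = {"anti_aliasing": "off", "ray_tracing": "off"}


def build_settings(preset, overrides=None):
    """Start from the extras, apply a preset, then apply any custom overrides."""
    settings = dict(EXTRA_OPTIONS)
    settings.update(PRESETS[preset])
    settings.update(overrides or {})
    return settings


if __name__ == "__main__":
    # The ultra preset alone does not switch on the extra options.
    print(build_settings("ultra"))
    # A "custom" setup: high preset with cheaper shadows and anti-aliasing on.
    print(build_settings("high", {"shadow_quality": "medium", "anti_aliasing": "on"}))
```

In this made-up example, picking ultra still leaves ray tracing off, which mirrors the point above that "ultra" and "everything maxed out" are not always the same thing.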

Are Ultra Settings Worth It?

Are ultra settings a must-have for PC gaming? How much better are they than high, or even medium settings/presets? Is it a waste of a game if you're NOT playing on ultra settings? Of course the answer to that last one is no - you don't NEED to be running maxed-out ultra settings to fully enjoy a game.

But whether or not ultra settings are worth it, and how much of a difference they'll make to graphics and the overall experience, will vary from game to game, and from gamer to gamer. Some games look noticeably better on maximum settings compared to high or medium graphics settings, whereas in other titles you may not even notice the difference.

Keep in mind that, generally speaking, the higher the settings, the less of a difference you'll see. In other words, a jump from low to medium, or medium to high, is likely to be a bigger change than going from, say, high to ultra/maxed. So I'd say that playing on high vs ultra isn't too much of a difference in most games, and most gamers won't really notice it.

Another factor in whether ultra settings are worth aiming for, besides whether or not your PC can produce a good enough frame rate on ultra settings, is how much of a graphics nut you are and how much attention to detail you, well, pay attention to. The type of game you're playing also matters. In a fast-paced shooter or action game for instance, you're probably not taking too much time to stop and smell the roses and just stare at the pretty graphics.

Stare at something for a millisecond too long in CSGO and you're dead. But in slower paced games that put more of a focus on amazing visuals, effects, and attention to detail - think Witcher 3 or the Crysis series as cliche examples - running the highest graphics settings you can is more important. Running Witcher 3 on PC on low settings is a bit of a waste of an epic experience IMO, and you know the developers are hurting inside when you do that.

I'd say having higher settings is more important, and more noticeable, when playing immersive, atmospheric games like The Witcher 3 where there's plenty of time to soak in views like this

Finding Optimal Custom Settings for Max Performance

If you want to spend more time changing graphics settings around to find the optimal balance of performance and graphics quality for your particular system and game, you can go beyond merely changing the default presets to manually tweaking the individual, specific graphics settings. Sometimes a certain preset may not be optimally set for maximum performance and you'll want to use a custom preset instead.

For example, say you have a game set on a high preset, which probably sets all of the game's graphics settings to high (not necessarily, though, as some developers tweak presets more precisely). There may be certain settings you'll want to set to ultra instead (settings that don't affect FPS too much), and other specific settings you may wish to lower: settings that are the most taxing on frame rate, or settings that are the most CPU-intensive if your setup's weaker link is your gaming processor.
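If you've tested how many FPS you gain by lowering each setting one step, a simple approach is to lower the most taxing settings first and stop as soon as you reach your target. The sketch below assumes you have those measurements yourself; the setting names and numbers are invented for illustration, and it treats the gains as roughly additive, which real games only approximate.

```python
def settings_to_lower(baseline_fps, fps_gain, target_fps):
    """Pick settings to lower, biggest estimated FPS gain first, until the target is met.

    Returns the chosen setting names and the estimated resulting FPS.
    """
    chosen = []
    estimated = baseline_fps
    ranked = sorted(fps_gain.items(), key=lambda item: item[1], reverse=True)
    for name, gain in ranked:
        if estimated >= target_fps:
            break  # target reached, leave the remaining settings alone
        chosen.append(name)
        estimated += gain
    return chosen, estimated


if __name__ == "__main__":
    # Hypothetical FPS gained by dropping each setting one step on one PC.
    gains = {"shadows": 9, "textures": 1, "foliage": 5, "ambient_occlusion": 4}
    print(settings_to_lower(baseline_fps=48, fps_gain=gains, target_fps=60))
```

With these example numbers, lowering shadows and foliage is estimated to be enough to get from 48 to 60+ FPS, so textures (which barely cost anything here) can stay where they are. Always re-test in-game afterwards, as the estimate is only a starting point.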

When it comes to manually changing PC graphics settings for optimal performance, every game will be slightly different and it's going to take a bit of trial and error to find the best settings. There are plenty of guides online on tweaking settings for a particular game, such as our own settings guide for Cyberpunk 2077. Check out the Digital Foundry YouTube channel for some great videos on game settings.


About the Author (2025 Update)

I'm an indie game developer currently very deep in development on my first public release, a highly-immersive VR spy shooter set in a realistic near-future releasing on Steam when it's ready. The game is partly inspired by some of my favorites of all time including Perfect Dark, MGS1 and 2, HL2, Splinter Cell, KOTOR, and Deus Ex (also movies like SW1-6, The Matrix, Bladerunner, and 5th Element).

Researching, writing, and periodically updating this site helps a little with self-funding the game as I earn a few dollars here and there from Amazon's affiliate program (if you click an Amazon link on this site and buy something, I get a tiny cut of the total sale, at no extra cost to you).

Hope the site helps save you money or frustration when building a PC, and if you want to support the countless hours gone into creating and fine-tuning the many guides and tutorials on the site, besides using my Amazon links if purchasing something, sharing an article on socials or Reddit does help and is much appreciated.